spaCy allows to augment the model-based named entity recognition with custom rules. I found that the documentation on this is a bit lacking (https://spacy.io/usage/rule-based-matching#entityruler) cross-references.

Here I would like to gather some links and hints for working with custom rules.

The example I use throughout is labeling US-style telephone numbers (e.g. “(123)-456-7890” or “123-456-7890”).

Tests

My first recommendation is to add a lot of tests for your use case. When you download a new model, add another custom rule to the tokenizer or just upgrade spacy, things might break. The differences in named entity recognition between the provided small, medium and large English models are significant.

So we aim for tests in the style of:

texts = [("This is Fred and his number is 123-456-7890.", 1),

("Peter (001)-999-4321 is the big Apple Microsoft.", 1),

("Peter (001)-99-4321 is the big Apple Microsoft.", 0)]

for text, num_tel in texts:

doc = nlp(texts)

self.assertEqual(len([ent for ent in doc.ents if ent.label_ == "TELEPHONE"]), num_tel)

Phrase patterns vs. token patterns

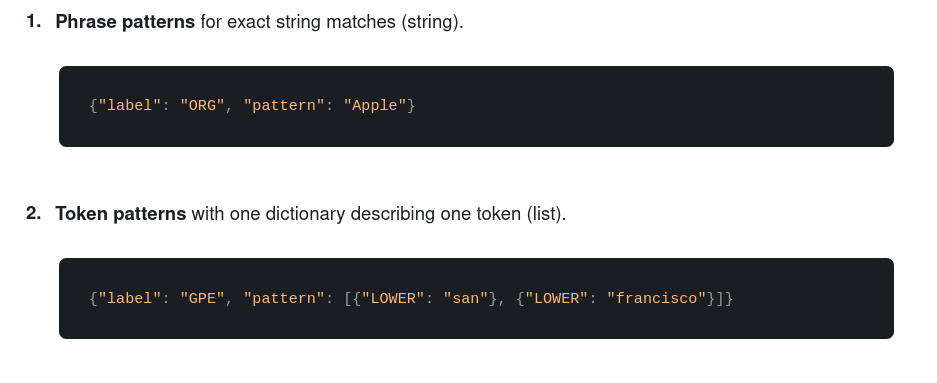

The documentation describes phrase- and token patterns here https://spacy.io/usage/rule-based-matching#entityruler. What might be misleading here is that the example for phrase patterns uses a single word.

The point here is that for a phrase pattern you can match arbitrary text spanning multiple tokens with a single pattern, while for token patterns you specify a list of patterns with one pattern per token. Example:

# phrase pattern

pattern_phrase = {"label": "FRUIT", "pattern": "apple pie"}

# similar token pattern

pattern_token = {"label": "FRUIT", "pattern": [{"TEXT": "apple"}, {"TEXT": "pie"}]}

Note that those two are not completely equivalent. The token pattern is dependent on the tokenizer. Which means if the rules of the tokenizer change, the pattern might not match anymore. At the same time the token pattern also matches “apple pie” (multiple whitespaces between the words).

For our example it’s obvious we will have to use token patterns because phrase patterns allow only matching of exact strings.

Pattern options

It’s not explicitly mentioned in the documentation for the entity ruler but you can use the same patterns as described in the token matcher documentation here: https://spacy.io/usage/rule-based-matching#adding-patterns-attributes. Most notably, the REGEX option as described here also works: https://spacy.io/usage/rule-based-matching#regex

So one option for our use case is to specify the token patterns as follows:

pattern_tel = {"label": "TELEPHONE", "pattern": [

{"TEXT": {"REGEX": r"\(?\d{3}\)?"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{3}"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{4}"}}

]}

It has to be noted that this relies on the tokenizer to split the tokens by “-“. So we actually match on 5 tokens here. Another option is to first use a custom component to merge such phone numbers as described here https://spacy.io/usage/rule-based-matching#matcher-pipeline.

Adding the pattern

This one is straightforward:

import spacy

from spacy.pipeline import EntityRuler

pattern_tel = {"label": "TELEPHONE", "pattern": [

{"TEXT": {"REGEX": r"\(?\d{3}\)?"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{3}"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{4}"}}

]}

nlp = spacy.load('en_core_web_sm')

ruler = EntityRuler(nlp)

ruler.add_patterns([pattern_tel, pattern_name, pattern_apple])

nlp.add_pipe(ruler)

doc = nlp("This is Fred and his number is 123-456-7890 to get an apple pie")

print([(ent, ent.label_) for ent in doc.ents])

Output:

[(Fred, 'PERSON'), (123, 'CARDINAL')]Seems there’s something wrong.

Adding entity ruler at the correct position

The documentation states that you have to add the entity ruler before the “ner” component but does not describe how, nor gives a link in this paragraph. So here we go: https://spacy.io/api/language#add_pipe. So let’s do this:

import spacy

from spacy.pipeline import EntityRuler

pattern_tel = {"label": "TELEPHONE", "pattern": [

{"TEXT": {"REGEX": r"\(?\d{3}\)?"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{3}"}},

{"TEXT": "-"},

{"TEXT": {"REGEX": r"\d{4}"}}

]}

nlp = spacy.load('en_core_web_sm')

ruler = EntityRuler(nlp)

ruler.add_patterns([pattern_tel, pattern_name, pattern_apple])

nlp.add_pipe(ruler, before="ner")

doc = nlp("This is Fred and his number is 123-456-7890 to get an apple pie")

print([(ent, ent.label_) for ent in doc.ents])

Output:

[(Fred, 'VIP'), (123-456-7890, 'TELEPHONE')]Summary

We’ve seen how to add a regex based custom rule to the named entity recognition of spaCy. In a subsequent article we will explore more complex patterns.

Recent Comments